Why DLP Never Worked - And What We Built Instead

I’ve seen enough DLP programs up close to notice a pattern that’s hard to ignore.

When a program fails, we usually blame the surface-level symptoms: the noise, the friction, the rigid rules. But those are just side effects. The deeper issue is simpler: Legacy DLP systems were never built to understand the organizations they protect.

They understand regex. They understand "allow" and "block." But they don’t understand reality.

Policies Are Optimistic. Reality Is Messy.

Think about your written security policy. It is a very optimistic document. It represents how you hope data will be handled:

- Where it should live.

- Who should touch it.

- When it absolutely shouldn’t move.

But your organization isn’t a PDF. It’s a living system. Teams evolve, deadlines win, and people improvise. Data follows the work, not the diagram.

So security teams spend their lives maintaining a map of a world that no longer exists. You tune rules to accommodate exceptions. You silence alerts you don’t trust. You delay enforcement because you know a rigid rule might break a critical business process. And if you can’t delay enforcement, you end up with heavy-handed blocking that makes DLP not just a security (or lack-of-security) problem, but an HR problem, a culture problem and, frankly, a business problem.

What If Your DLP Learned Like a New Hire?

When a new analyst joins your SOC, you don’t hand them a binder of regex patterns and walk away. You mentor them. You investigate together. You say things like:

"This looks scary, but it’s actually normal for the Finance team during quarter-close."

"This is technically allowed, but I don’t love it. Keep an eye on it."

Over time, that analyst stops flagging the noise. They start recognizing the patterns you never explicitly taught them. They learn the context.

That was our realization. To fix DLP, we didn’t need better rules. We needed a system that could learn the context: one that would deeply understand each business it’s deployed into.

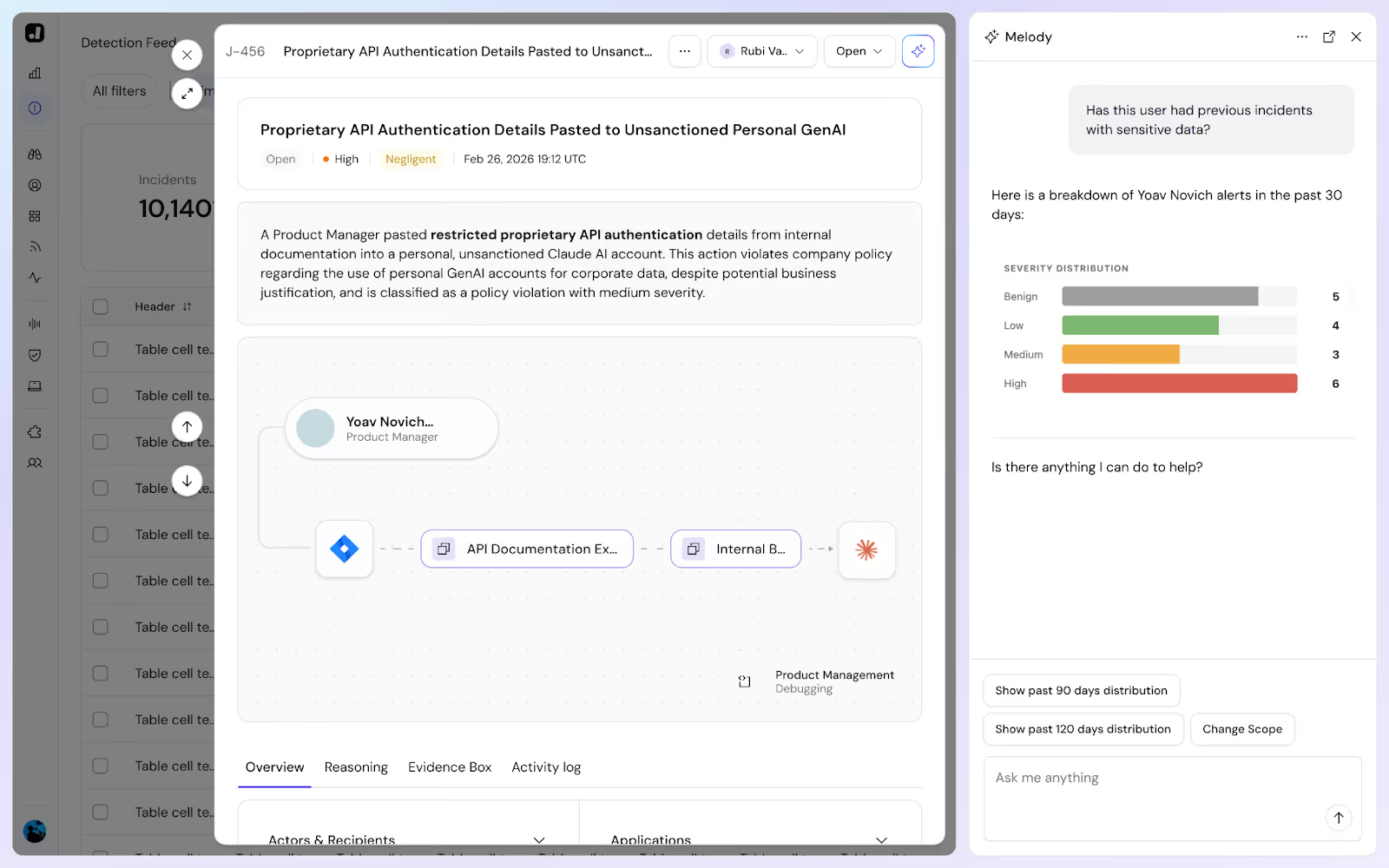

Meet Melody: The Brain of the Platform

This is where Melody comes in.

Melody isn't just a “cool chatbot” wrapper that sits on top of the same old DLP.

Melody is your DLP agent, the central autonomous intelligence of the Jazz platform. She wears multiple hats and sets the undertone for the entire Jazz ensemble:

- The Investigator: Melody autonomously connects signals to data flows, decides which ones should be investigated, and then analyzes them in depth across the four dimensions that matter: the data, the systems, the people involved and the business processes. She works out what happened, why it happened and what the actor intended.

- The Judge: Melody then compares her understanding of the situation to her flexible grasp of company policy: what’s OK and what isn’t. She runs a human-like assessment, connecting the dots and extrapolating where the policy isn’t explicit, as a human analyst would. She then decides whether this is a policy violation and, if so, how severe it is, which determines how the incident is bubbled up to the team.

- The Learner: Melody observes every investigation and learns your business in the process: what data people use, with which systems, in which teams and for what purpose. She helps the security team bridge the gap between theoretical policy and the hard truths of day-to-day business processes and data practices.

- The Co-Pilot: Melody is your partner and guide in crafting your DLP program and in day-to-day DLP work. She surfaces situations that call for a discussion and provides additional insights when you need them. She flags the policy decisions that need to be made, accelerating your joint learning about your organization. And she guides you toward prevention policies that actively reduce risk to your data.

While her work as Investigator, Judge and Learner happens silently, her role as Co-Pilot is what changes your daily life. It shifts the job from "writing rules" to "having a conversation," much as you would with a peer, except this one is deployed at scale.

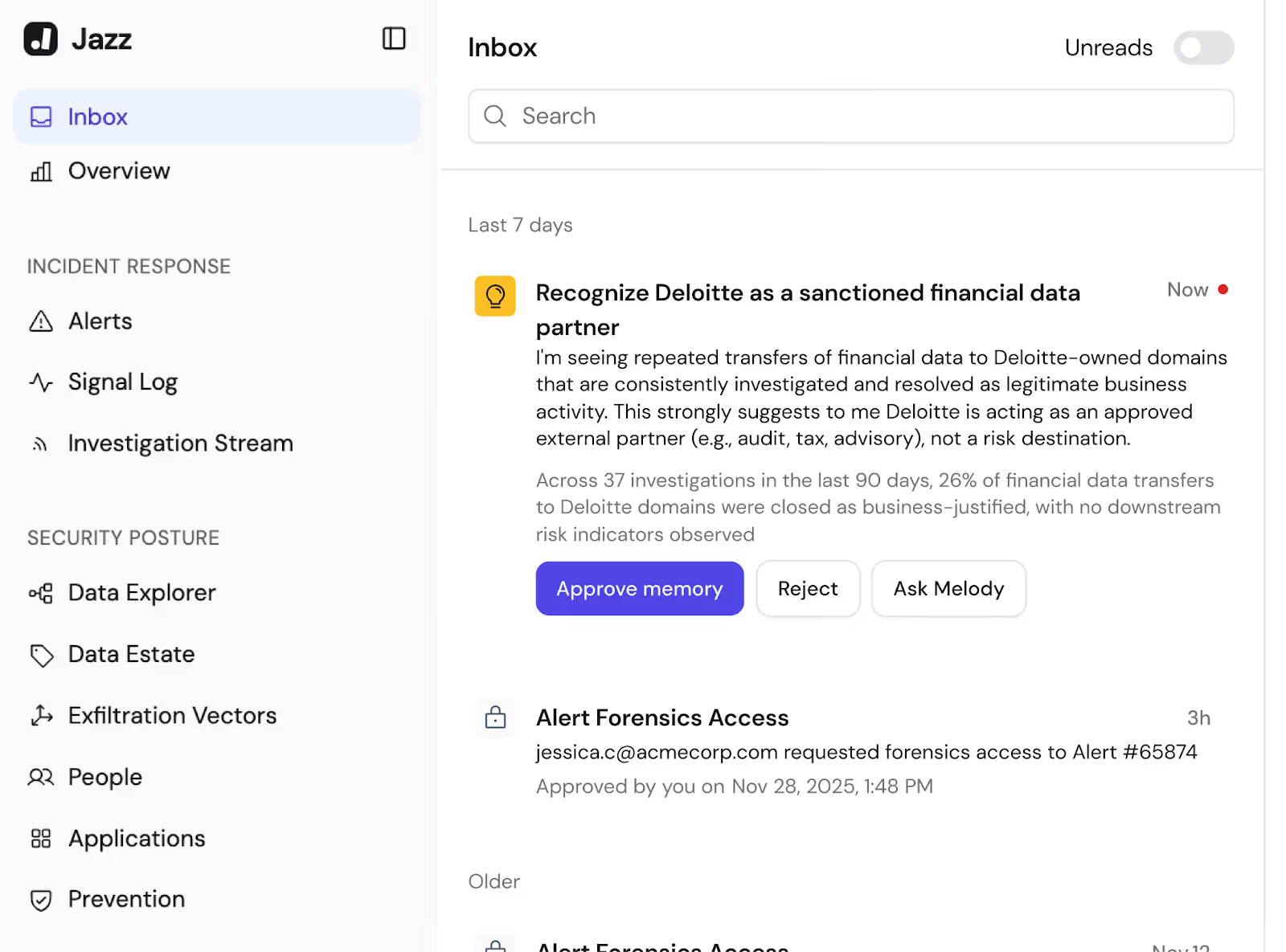

The Co-Pilot in Action: “Is This Okay?”

A typical day with Melody doesn't look like configuring a firewall. It looks like gardening. You prune, you guide, and you shape the system. And you do it with a partner.

For example, Melody might surface a new behavior: several teams are sharing material data with an unfamiliar partner, say, Deloitte.

You don’t start by writing a regex rule to block or allow "Deloitte." You start by answering Melody's question:

"Is this an acceptable business activity, or something we want to change?"

When Melody Pushes Back (And Gets It Right)

One of my favorite moments with a customer revolved around exactly this kind of question. They had an employee routinely exporting customer PII to a third-party system. The security lead, pragmatic as ever, told us:

"I know it’s bad. We’re fixing it offline. Just suppress the alert for now."

Melody didn’t agree. Instead, she suggested a nuanced approach:

- Treat it as "Business Justified" (don't block it).

- But mark it as "Problematic" (keep tracking it).

- Downgrade it to a low-severity issue so it doesn’t wake up the SOC.

The CISO paused. "Yeah... that actually makes way more sense."

That’s not magic. That’s the difference between a "mute button" and a partner that understands tradeoffs.

Granularity Without the Headache

Because Melody understands natural language, you can finally have policies that match the nuance of the real world and your specific business needs. You can tell her:

"We generally don’t allow GenAI for code - but if it’s the Engineering team, and they are only optimizing functions without exposing secrets or credentials, and they do that with our Enterprise Gemini account, then let it slide."

Or:

"Deloitte is one of our trusted partners. But I still don’t want non-public financial data going to them unless it’s already been disclosed in our quarterly earnings."

Try writing that in a rigid, technical rule.

With Melody, you don't have to encode all this logic upfront. You express it as you encounter it. Each decision sticks. Each clarification compounds. Over time, the system stops asking naïve questions because it remembers how you reasoned before.

Less Technical Tweaking, More Business Nuance

This might sound strange to say about a security product, but working with Melody is actually... satisfying.

It feels less like engineering and more like gardening. You aren't building a stone wall; you are cultivating a system that gets smarter every day.

One of our customers (a very experienced, no-nonsense CISO) was skeptical of the "no rules" promise. We didn’t push him. We just let him work with the system for a week.

Within a few days, he sent us this message (exact quote):

"Melody rocks. It's a great way to fine-tune configurations. I’m impressed. Nice, nice job."

What We Actually Believe

We didn’t build Melody because we wanted to jump on the AI hype train. We built her because we believe a DLP system should:

- Learn as the organization changes.

- Remember context instead of forcing you to re-explain it.

- Reflect how data is actually used, not just how a policy says it should be used.

DLP shouldn't require a full-time job just to remain relevant. It shouldn't fight the organization it’s meant to protect. And it shouldn't stay dumb while everything else evolves.

Melody is our answer to that belief. See it for yourself.